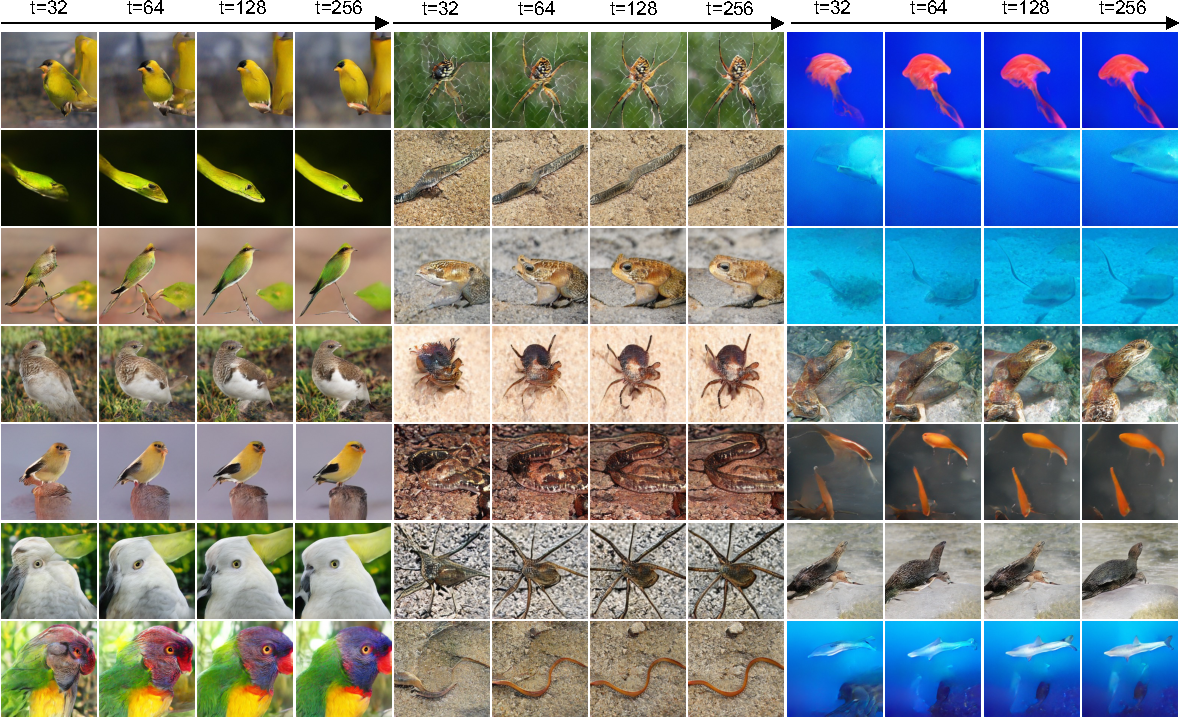

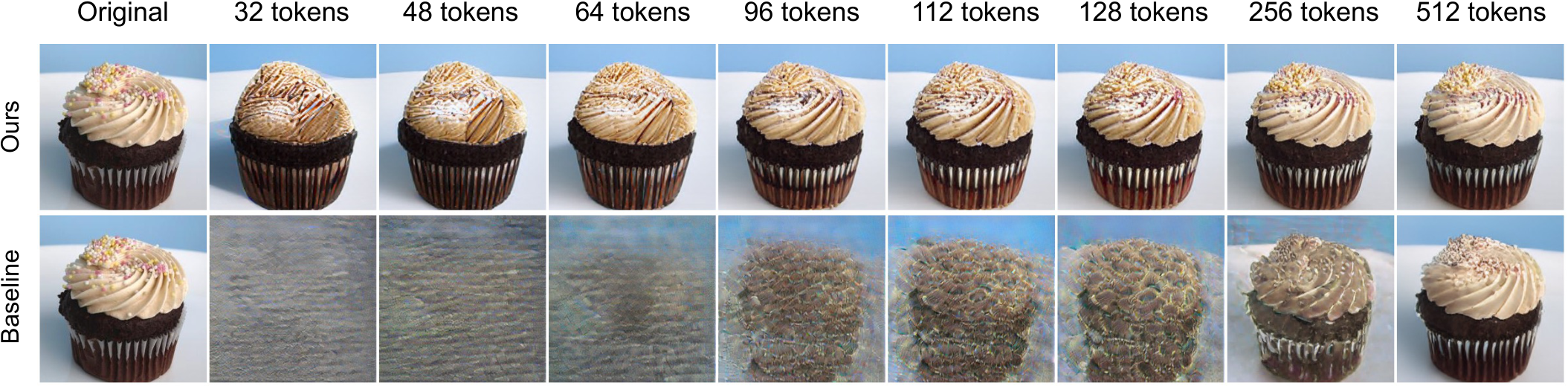

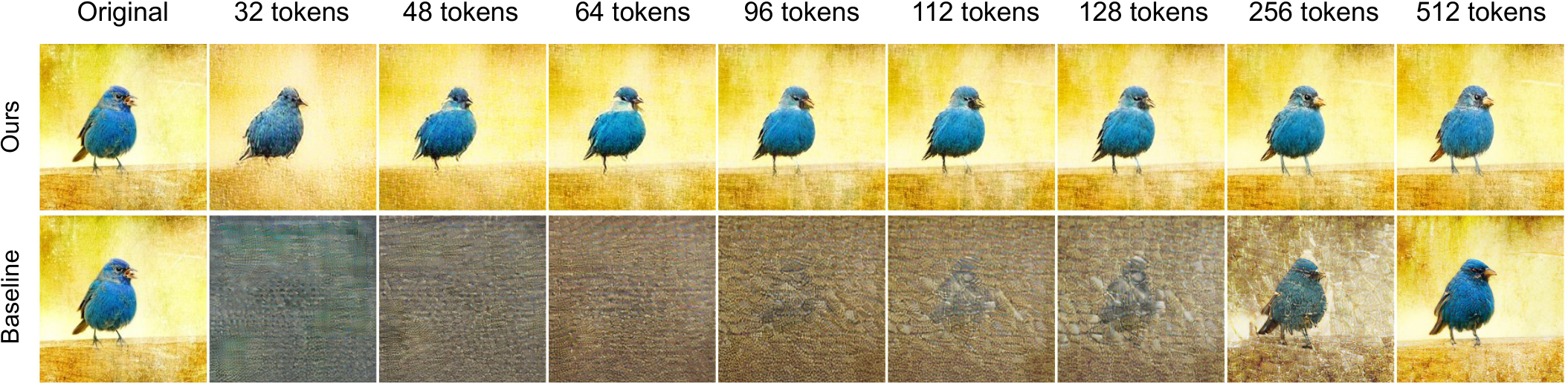

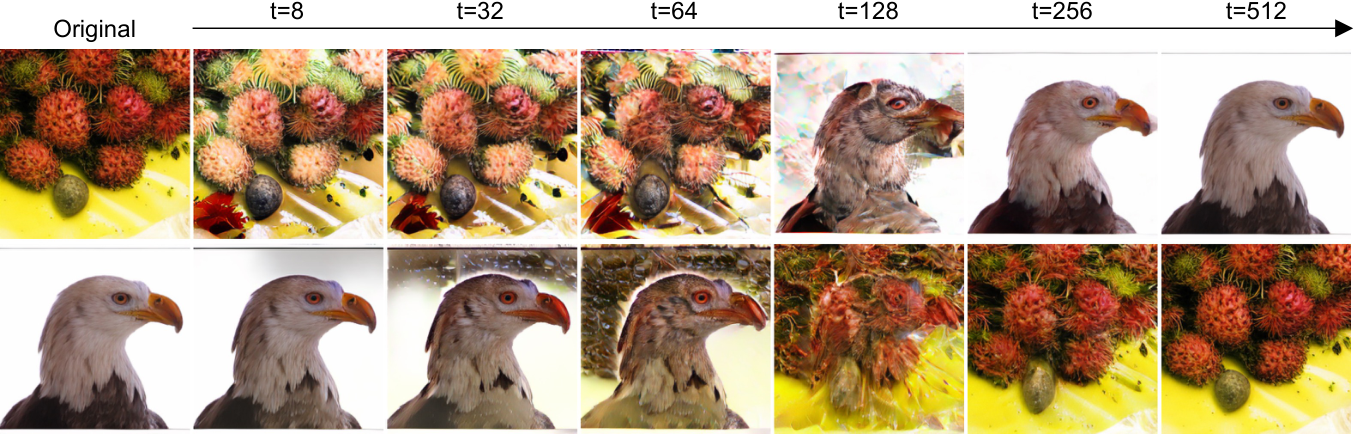

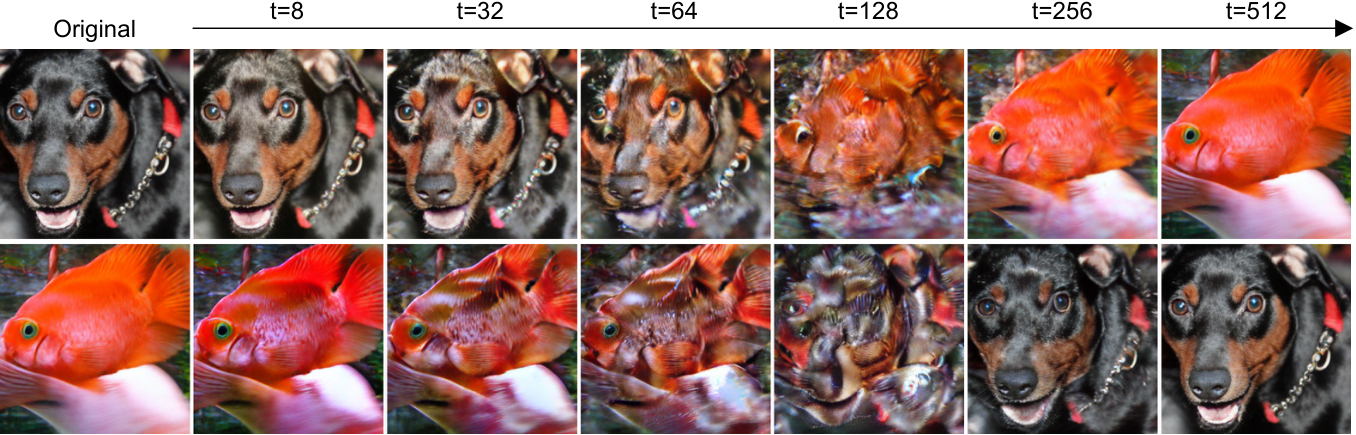

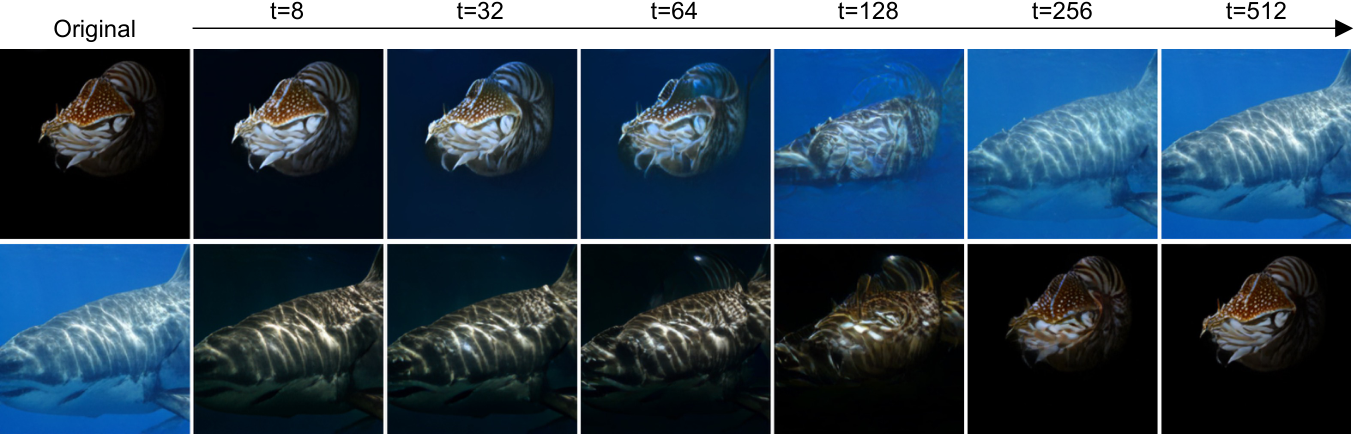

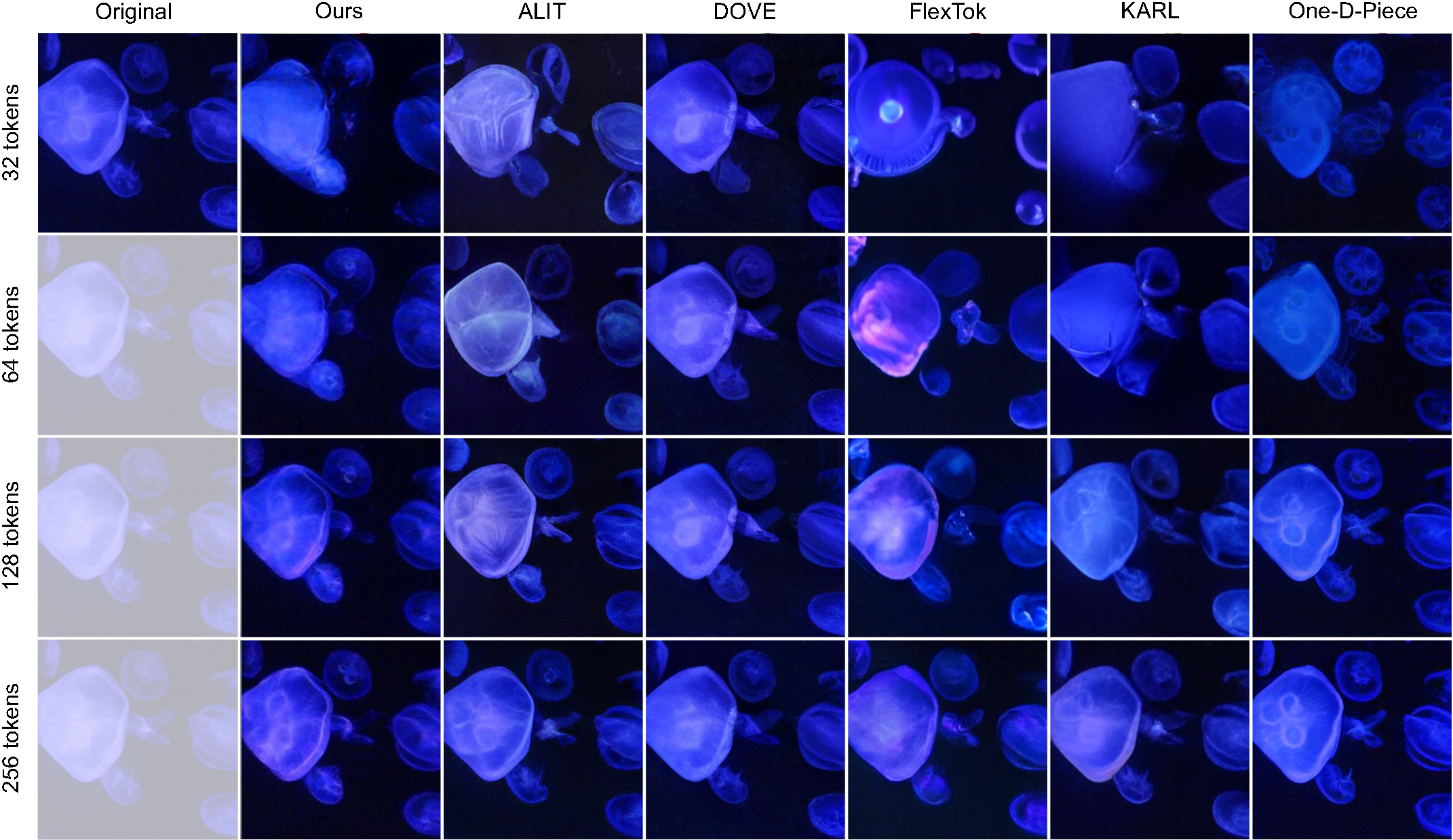

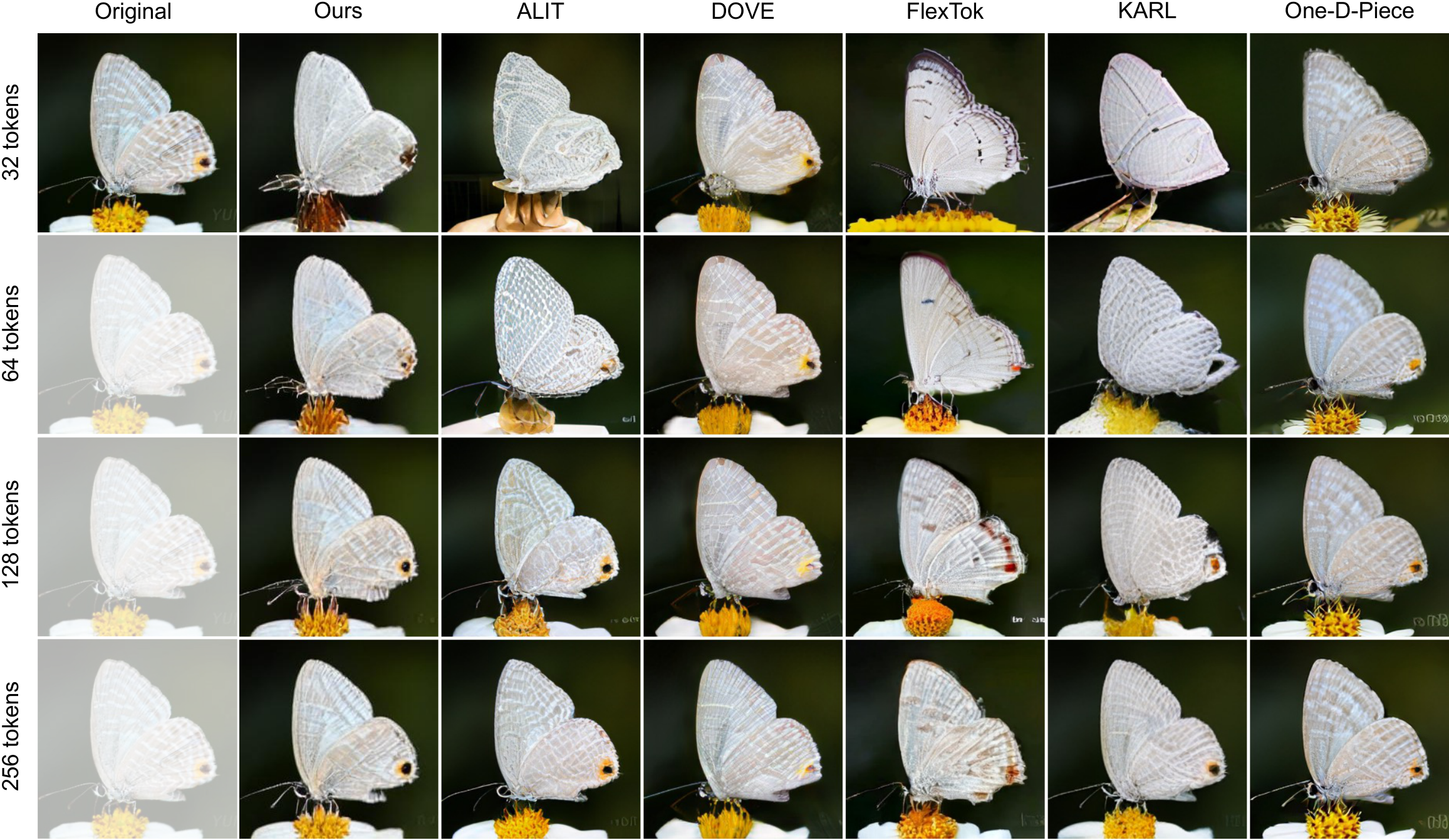

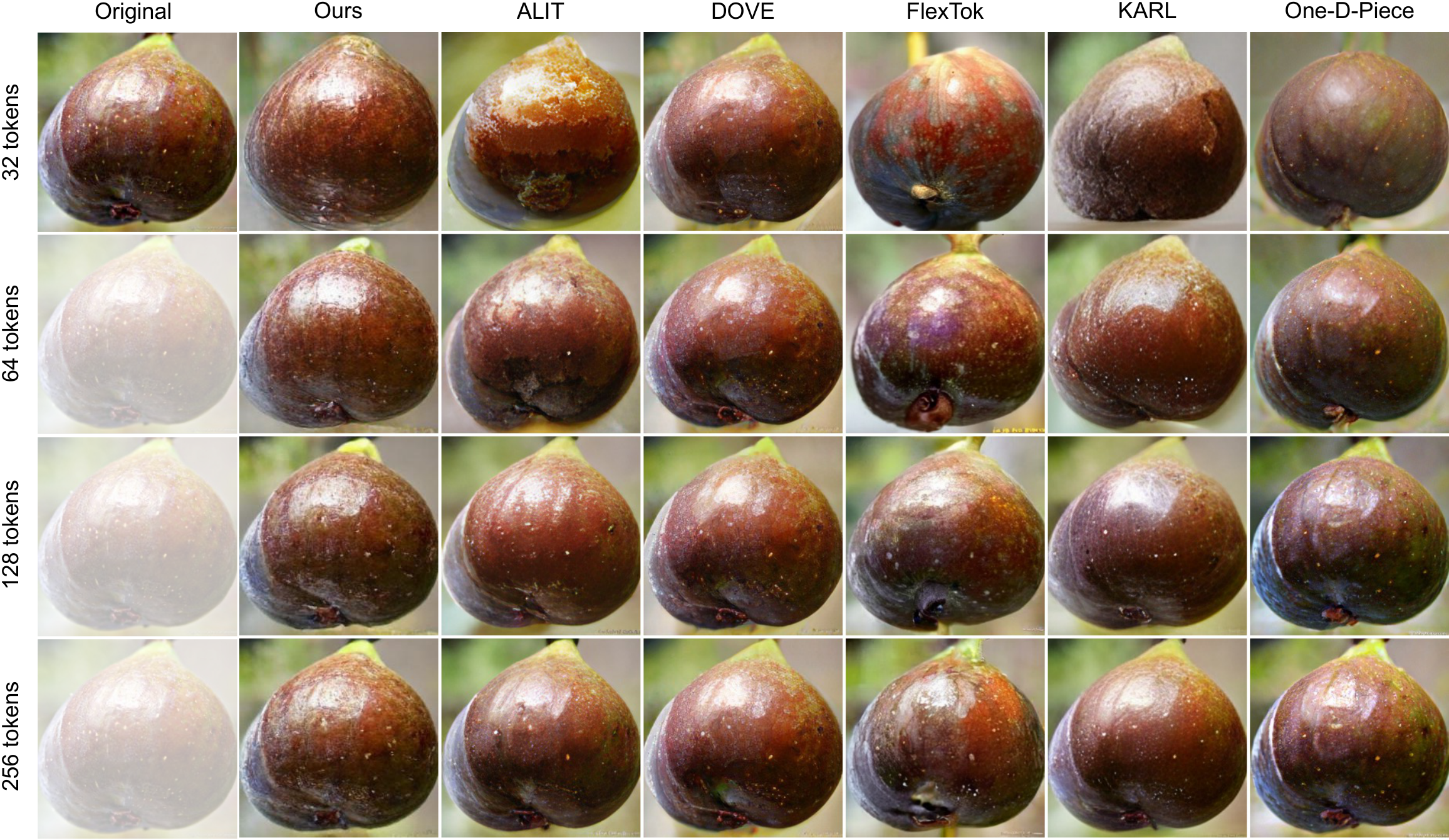

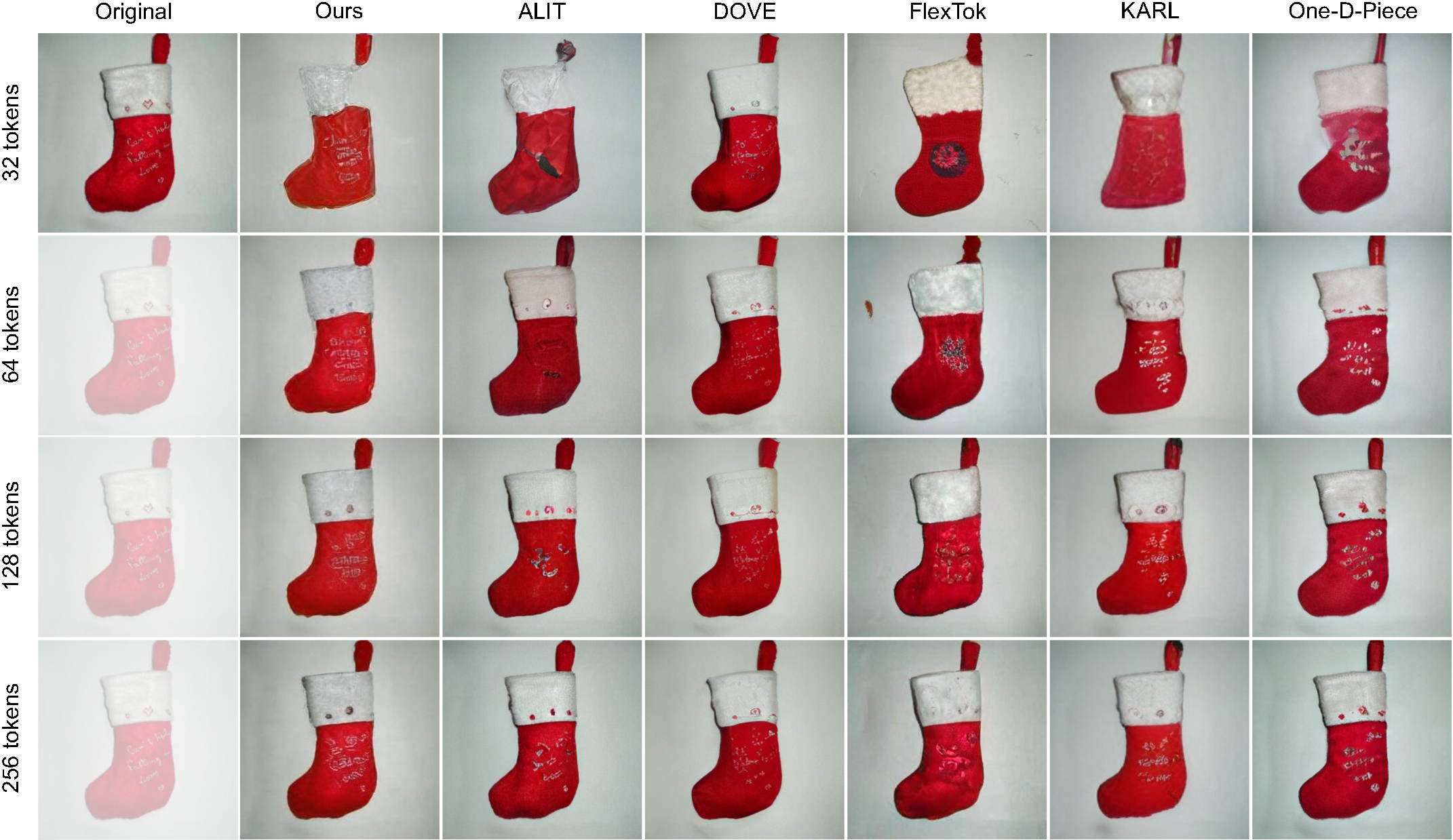

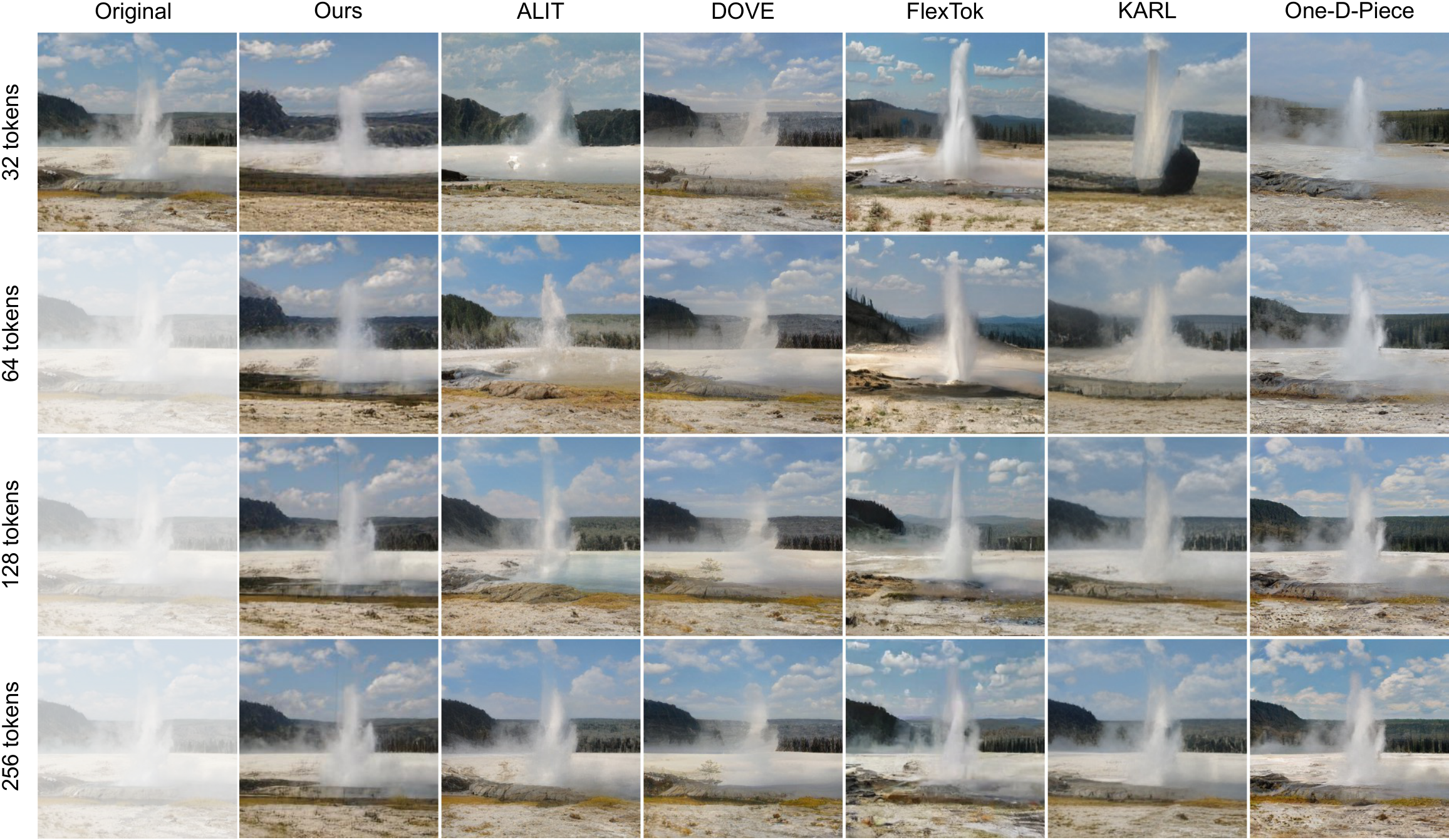

Qualitative comparison vs. state-of-the-art (32–256 tokens).

Reconstructions from ChannelTok and prior flexible tokenizers (OneDPiece, DOVE, ALIT, KARL, FlexTok) are shown alongside the original image. Our method maintains colour consistency, sharpness, and structural fidelity at every token budget from 32 to 256.

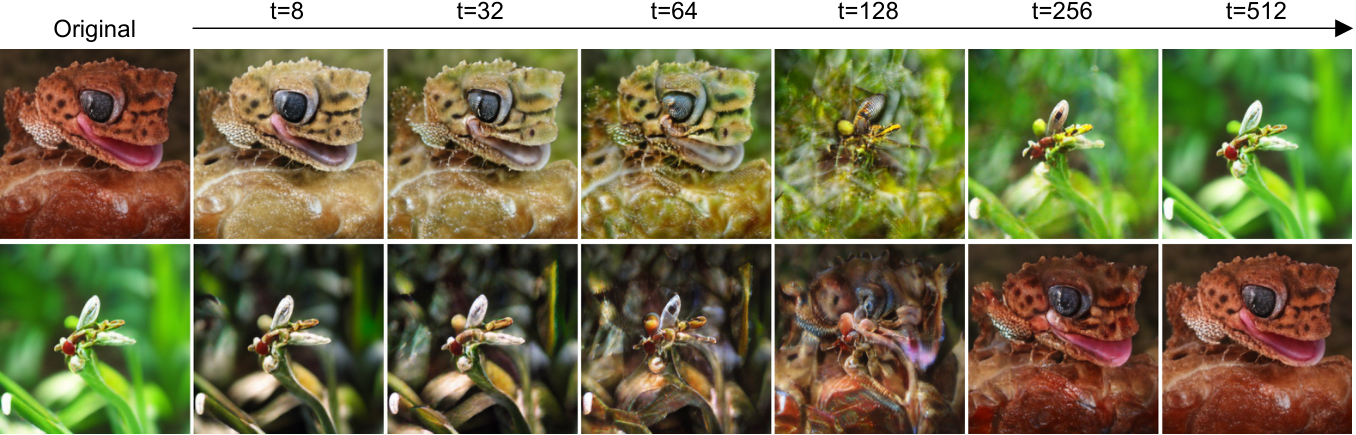

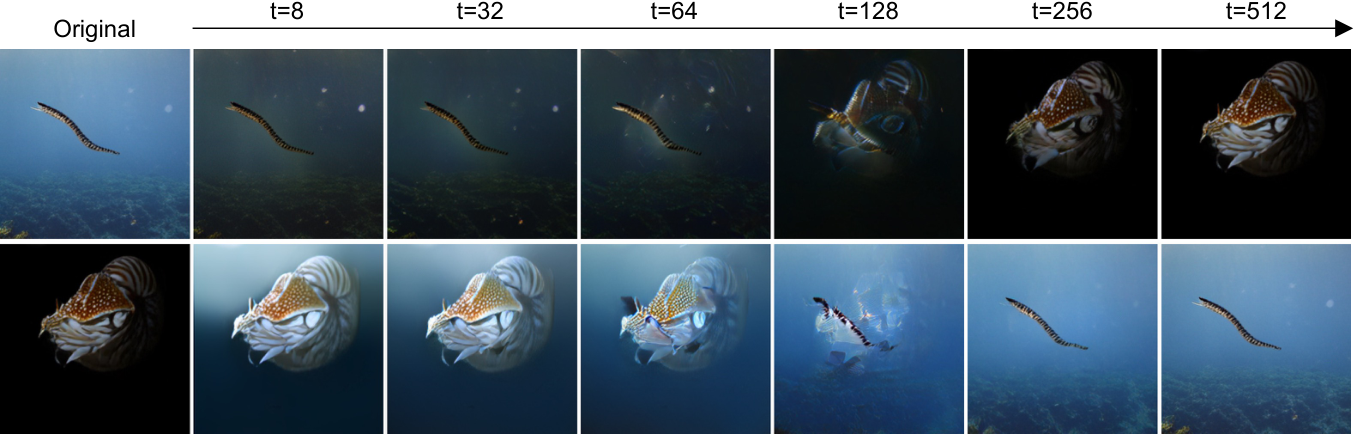

Contrasting foreground/background: jellyfish.

Against a dark background, our method preserves colour fidelity and fine tentacle structure even at low token budgets where competing methods wash out or blur detail.

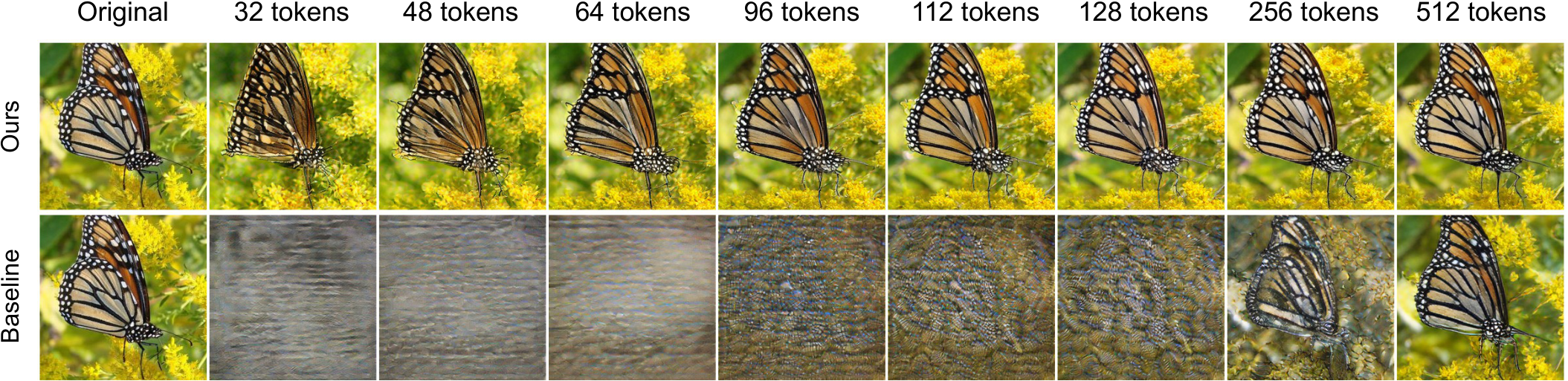

Fine-grained detail: butterfly on a flower.

Subtle wing textures and sharp edges emerge progressively as the token budget increases. Our method recovers perceptually salient detail at lower budgets than competing methods.

Varied textures: red mushroom with mossy background.

Our method retains fine surface detail and colour fidelity at low token budgets. Competing methods introduce colour artifacts and lose surface texture at 32–64 tokens.

Dark subject with surface sheen: round fruit.

Competing methods introduce colour artifacts and lose surface highlights at low token counts. Our method maintains perceptual consistency and tonal accuracy across all budgets.

Text and vibrant colours: Christmas stocking.

All methods struggle to render legible text at very low budgets, but our method begins to recover readable structure by 128 tokens while maintaining colour vibrancy throughout.

Complex natural scene: geyser eruption.

Our method recovers landscape structure and atmospheric detail more faithfully than the alternatives, even at moderate token budgets, and avoids the blurring and colour drift they exhibit.